No.63

感情生成のための知能モデルを開発研究

Research and development of an intelligent model for emotion generation

感性ロボットの行動研究

人間と共感し合えるロボットの実現を目指す

Research of Kansei* Robot behavior

Creating a robot that can empathize with humans

* Kansei: a Japanese word with a range of meanings related to human sense, feeling, affection, and emotion.

システム理工学部

徳丸 正孝教授

Faculty of Engineering Science

Professor Masataka Tokumaru

ホテルのフロントで接客するロボット、家族の一員として共に暮らすロボット。ロボットは少しずつ身近なところで見かけるようになった。一方、AIは人間の仕事を奪ったり、さらに進化が進むと人間社会をコントロールしたりするのではと恐れられることもある。AIやロボットと人間はどのように共存し、共栄していくのか。システム理工学部の徳丸正孝教授は、ロボットと人間が共感し合うコミュニケーションの在り方を求めて、研究を進めている。

Robots are slowly becoming a part of our daily lives, whether serving customers at hotel front desks or living with people as part of a family. At the same time, there are fears that artificial intelligence (AI) may take away human jobs and, as it develops further, come to control human society. How can AI and robots coexist and prosper alongside humans? Professor Masataka Tokumaru of the Faculty of Engineering Science is pursuing research into forms of communication in which robots and humans empathize with one another.

感情豊かなロボットを育てる

AIやロボットを研究されているとお聞きしています。どのような研究でしょうか?

AIの進化によって、今、ロボットは人間の微妙な表情や声の違いから、人間の感情を細かいところまで識別できるようになってきています。そうすると、今度はロボットでも人間のような微妙な感情を表現してみたい。そのために、人間と同じように、いろいろな外的刺激によって、嬉しくなったり、落ち込んだりする「感情生成モデル」の設計に取り組んでいます。

その中で、ロボットと人間がちょっとした会話で、同じような感情を共有する、つまり「共感」を持つようにできないか。あるいは、「怒りっぽい」や「楽天的な」のように、ロボットにもキャラクターごとに違いを持たせて、人間とコミュニケーションができるようになったら楽しいのではないかと考えています。

具体的には、研究は現在どの段階で、どのような実験をしているのでしょうか?

感情生成モデルについては、人間の子どもの感情がだんだん豊かになっていく、教育心理学の発達モデルを参考に、人間とロボットがコミュニケーションを重ねるに従って、その相手に影響されながら、感情の表現も発達していくものを作ろうとしてきました。

現状では、ハードウェアとしてのロボットはまだ感情をうまく表現できません。そのため、パソコン上のバーチャルな世界に、3DのCGで架空の生物のようなロボットを数体設計し、動物の訓練のように、人間がCGのロボットとコミュニケーションをとりながら、動作を教えこむということをしています。CGのロボットにはそれぞれキャラクターがあるので、ロボットごとに訓練の方法を変え、うまく動作ができた時にはロボットも人間も共に喜べるように設計しました。この感情生成モデルによって、ロボットがどのような振る舞いをするかを観察しました。

また、感情の生成と並行して、ロボットが人間と何かを一緒にする時に、お互いに空気を読んで行動できるかを試す実験にも着手し始めたところです。

Developing emotional robots

I understand that you research AI and robotics. Can you tell us more about your research?

Thanks to the evolution of AI, robots can now identify human emotions in fine detail from subtle differences in facial expressions and voices. The next step is to have robots express similarly subtle emotions themselves. To that end, we are designing an "emotion generation model" in which, as in humans, various external stimuli make the robot feel happy or sad.

Within that work, we are asking whether a robot and a human can come to share similar emotions, that is, to empathize, through a brief conversation. We also think it would be fun if robots could be given different characters, such as "short-tempered" or "optimistic," and communicate with humans accordingly.

What stage of research are you currently in, and what type of experiments are you conducting?

For the emotion generation model, we draw on developmental models from educational psychology that describe how children's emotions gradually become richer. We have been trying to build a model in which the robot's emotional expression develops as it communicates repeatedly with humans, shaped by the people it interacts with.

At present, robots as hardware are not able to express emotions effectively. We have therefore designed several robots that look like fictional creatures in a virtual world on a PC, using 3D computer graphics (CG). Much as in animal training, humans communicate with these CG robots and teach them actions. Because each CG robot has its own character, we varied the training method for each robot and designed the experiment so that both the robot and the human are pleased when the robot performs an action well. Using this emotion generation model, we observed how the robots behaved.

In parallel with emotion generation, we have also begun conducting experiments to observe whether robots and humans could anticipate each other's intentions during cooperation.

ドッグトレーニングからヒントを得たスライムロボットの行動調教実験。タイルの光り方に対してどの方向に動くのが正しいのかをロボットは学習していくが、ロボットには気質と感情のモデルが実装されているので、それらのパラメータにより学習の効率(賢さ)と感情表現(素直さや起伏など)が変化する。被験者は、ロボットの気質を理解して調教を行うことによりロボットに親しみを持つようになることが期待できる。(現在環境構築中、今後実験を実施予定)

The image shows a behavioral training experiment for a slime robot inspired by dog training. The robot learns which direction to move in response to the way the tiles light up. Because it is equipped with a model of temperament and emotion, the parameters of that model change its learning efficiency (cleverness) and its emotional expression (honesty, emotional ups and downs, etc.). By understanding the robot's temperament as they train it, participants are expected to develop an affinity for it. (The environment is currently being built, and the experiment will be carried out in the future.)
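The idea in this caption, a learner whose temperament parameters change both how quickly it learns and how strongly it shows emotion, can be illustrated with a minimal sketch. This is not the lab's model, whose details the article does not give. The class `SlimeRobot`, the parameters `learning_rate` and `expressiveness`, the `mood` value, and the simple reward-based update are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass, field

DIRECTIONS = ["up", "down", "left", "right"]


@dataclass
class SlimeRobot:
    """Hypothetical learner; every parameter here is an illustrative assumption."""
    learning_rate: float   # assumed temperament parameter: "cleverness"
    expressiveness: float  # assumed temperament parameter: emotional ups and downs
    mood: float = 0.0      # assumed emotion state, kept within [-1, 1]
    values: dict = field(default_factory=dict)  # (tile pattern, direction) -> value

    def choose(self, pattern, rng, explore=0.1):
        """Pick a direction: mostly the best-valued one, sometimes a random one."""
        if rng.random() < explore:
            return rng.choice(DIRECTIONS)
        return max(DIRECTIONS, key=lambda d: self.values.get((pattern, d), 0.0))

    def feedback(self, pattern, direction, reward):
        """Update the value estimate and the mood from the trainer's reward."""
        key = (pattern, direction)
        old = self.values.get(key, 0.0)
        # A higher learning_rate moves the estimate toward the reward faster.
        self.values[key] = old + self.learning_rate * (reward - old)
        # A higher expressiveness makes the mood swing more per reward.
        self.mood = max(-1.0, min(1.0, self.mood + self.expressiveness * reward))


def train(robot, correct, trials, seed=0):
    """Run training trials; `correct` maps each tile pattern to the right direction."""
    rng = random.Random(seed)
    patterns = sorted(correct)
    hits = 0
    for _ in range(trials):
        pattern = rng.choice(patterns)
        direction = robot.choose(pattern, rng)
        is_right = direction == correct[pattern]
        robot.feedback(pattern, direction, 1.0 if is_right else -1.0)
        hits += 1 if is_right else 0
    return hits / trials  # fraction of trials in which the robot moved correctly
```

Under these assumptions, two robots trained on the same tile patterns with different `learning_rate` and `expressiveness` values would learn at different speeds and show different mood swings, which is the kind of contrast a trainer would need to read in order to adapt the training.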

ロボットは空気を読んで行動できるか

どのような実験ですか? ロボットも空気を読めるのでしょうか?

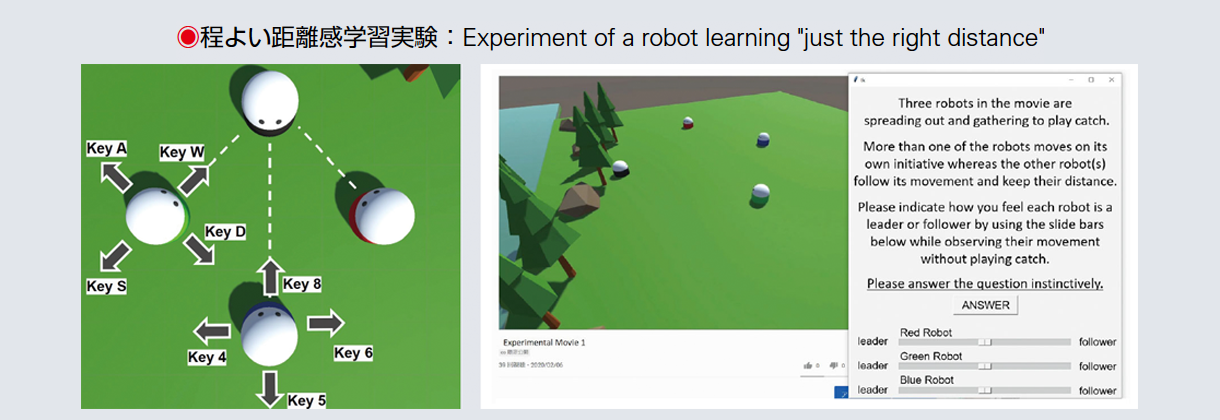

研究室にいる学生の意見で、ロボットと人間がお互いの程よい距離を保つ実験をしたら面白いのではないかと考えました。会話から相手の感情を識別して空気を読むのは非常に難しいですが、物理的な距離だとロボットに与える刺激も複雑でなく、データも取りやすいですから。

まず、バーチャルの世界にCGでロボットを4体用意します。3体は人間がコントロールし、もう1体はロボット自身の判断で動くように設定し、4体でキャッチボールすることにします。人間同士であれば広がりながらもお互いに相手を見ながら、ある程度の距離で止まります。ロボットは他の3体がどれぐらいの距離を保って広がっているかを学習しながら動き、同じ距離できちんと止まってキャッチボールを始めることができました。

この実験はバーチャルの中では完了しており、今はこの研究を実際に人間とロボットが動き回って、集合するときに適切な距離感をつかめるかを確かめる実験を計画しています。このロボットは前後左右に移動できるものを用意し、人間とロボットの位置を検出するVRセンサーをつけ、それぞれの動きはバーチャルの世界にも反映されます。

ただ、実際にどのような場面を想定するかが悩みどころで、「美術館でこの絵についてディスカッションするので、絵の周りに集まりましょう」や「ディスカッションしている輪の中に入りましょう」などの設定を議論しているところです。人が集合しているところに入り込もうとしたら、私たちなら少し間隔を空けますよね。このような人間独特の動きが、実は難しいのです。それをロボットで再現したい。空気を読むって、そういうことです。

Can robots guess intentions and act accordingly?

What kind of experiment is it? Can robots really guess intentions?

A student in the lab suggested that it would be interesting to conduct an experiment in which robots and humans maintain a comfortable distance from each other. Discerning another person's emotions from conversation and guessing their intentions is very difficult. With physical distance, however, the stimuli given to the robot are less complicated and data are easier to collect.

First, we set up four CG robots in a virtual world. Three robots were controlled by humans, and the fourth was set up to move according to its own judgment. The four robots then played a game of "catch." Humans in this situation spread out while watching one another and stop at a certain distance. The autonomous robot moved while learning how much distance the other three robots were keeping as they spread out, and it was able to stop at the same distance and start the game.

This experiment has been completed in the virtual world. We are now planning an experiment in which humans and a robot actually move around, to see whether the robot can find an appropriate distance when they gather. The robot will be able to move forward, backward, left, and right. VR sensors will detect the positions of the humans and the robot, and each movement will also be reflected in the virtual world.

However, we are still struggling to decide what kind of real-life situation to assume. We are discussing settings such as "We are going to discuss this painting in a museum, so let's gather around it" or "Let's join a circle of people having a discussion." When we try to join a group of people who have already gathered, we leave a little space, don't we? This uniquely human way of moving is, in fact, difficult to reproduce, and it is what I want to recreate with robots. That is what I mean by "guessing the intention."

集団規範学習モデルによる「程よい距離感」を学習するロボットの実験。1体は動かず、2体のロボットを2名のユーザが操作し、残り1体が学習モデルによる自律制御ロボットである。ユーザが操作する2体と自律制御のロボットは、集まった状態から「キャッチボールを想定して」離れて行き、自律制御のロボットは相互のロボットの距離感を確認しながらリアルタイム学習を行い、距離を取る。この様子を動画で記録し、被験者に各ロボットが距離感に対して他をリードしているか、フォローしているかの印象を調査する。

The image shows an experiment in which a robot learns "just the right distance" through a group norm learning model. One robot is immobile, two users operate one robot each, and the remaining robot is controlled autonomously by the learning model. Starting from a gathered position, the two user-operated robots and the autonomous robot move away from each other "as if they were going to play catch," and the autonomous robot learns in real time while checking the distances between the robots. This motion is recorded on video, and participants are surveyed on their impressions of whether each robot is leading or following the others in setting the distance.
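As a rough illustration of learning a distance in real time from the other robots, the sketch below keeps a running estimate of how far apart the others stand and moves the autonomous robot toward that spacing. It is not the group norm learning model used in the experiment, which the article does not describe in detail. The class `DistanceFollower`, the smoothing factor `rate`, the step limit `max_step`, and the choice to move along the line from the others' centroid are all assumptions.

```python
import math


def mean_pairwise_distance(points):
    """Average distance over all unordered pairs of (x, y) points (needs 2 or more)."""
    pairs = [(a, b) for i, a in enumerate(points) for b in points[i + 1:]]
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)


class DistanceFollower:
    """Hypothetical autonomous robot that imitates the spacing it observes."""

    def __init__(self, position, rate=0.3, max_step=0.5):
        self.position = position  # current (x, y) position
        self.rate = rate          # assumed smoothing factor for the running estimate
        self.max_step = max_step  # assumed limit on movement per time step
        self.target = None        # learned "comfortable" distance

    def mean_distance_to(self, others):
        """Average distance from this robot to each of the other robots."""
        return sum(math.dist(self.position, o) for o in others) / len(others)

    def step(self, others):
        """Observe the others, update the learned distance, and move once."""
        observed = mean_pairwise_distance(others)
        if self.target is None:
            self.target = observed
        else:
            # Exponential moving average: follow changes in the group's spacing.
            self.target += self.rate * (observed - self.target)

        # Unit vector pointing from the others' centroid toward this robot.
        cx = sum(x for x, _ in others) / len(others)
        cy = sum(y for _, y in others) / len(others)
        dx, dy = self.position[0] - cx, self.position[1] - cy
        radius = math.hypot(dx, dy)
        ux, uy = (dx / radius, dy / radius) if radius > 0.0 else (1.0, 0.0)

        # Move outward if too close, inward if too far, without crossing the centroid.
        error = self.target - self.mean_distance_to(others)
        move = max(-min(self.max_step, radius), min(self.max_step, error))
        self.position = (self.position[0] + ux * move, self.position[1] + uy * move)
```

In this sketch the robot only follows the spacing set by the others. A robot that sometimes leads, which is the impression the survey asks participants about, would need an additional rule that the article does not specify.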

パートナーとしてのロボット

ロボットが身近にある未来をどのように思い描いていますか?

ロボットに対して、道具としての効率性や利便性をとことん追求するのは、それはそれで一つの考え方だと思います。でも、私はあまり役に立たなくてもいいので、ちょっと面白い生き物のようなロボットがいると楽しいかなと思います。

生活のあらゆる場面での私の行動パターンが正解だとAIに教え続けたら、AIは完全に私と同じ活動、思考ができるようになるでしょう。そうすると、私がAIに指示を出せば、面倒なことを片づけてくれるかもしれません。しかし、私はそこにはあまり関心がありません。

ロボットが私のことを気に掛けて語りかけてくるようになると、きっと愛着が湧いてくるだろうし、一緒に作業ができたら楽しいだろうなと思います。AIは単に効率性や利便性を高めるだけのものではなく、もう少し温かみのある関係を築けるものでもあるという方向に持っていけたら。その一つのカギが感情ではないかと考えています。

Robots as partners

How do you envision a future with robots around you?

Developing a robot as an efficient and convenient tool is one approach. However, I think it would be entertaining to have a robot that may not be very useful as such but is simply an interesting creature.

If I kept teaching an AI that my patterns of behavior in every aspect of my life are the correct answer, it would eventually be able to act and think exactly as I do. I could then give it instructions and it might take care of tedious tasks for me. That is not where my interest lies, however.

If a robot starts talking to me and caring about me, I am sure I will become attached to it. I believe it will be enjoyable to work alongside it. The ideal goal is to create a warm and caring relationship with AI, rather than simply pursuing efficiency and convenience. I believe one of the key aspects of realizing this is emotion.

研究室の学生が実験用に製作したロボット

The image shows a robot built by students in the research group for the experiment.

ロボットは人間をどう変えるか

AIやロボットの研究を通じて気付いたことは何ですか?

私は感情を伴ったAIの開発研究に取り組んでいますが、研究を進めるうちに、そのAIと接した人間の振る舞い方に面白さを感じるように変わってきています。他の研究者とお話ししても、AIやロボットの研究は最終的には人間の研究だということになってくる。それは当然のことで、社会システムの変化に対応した人間の生活の変化の研究にもつながっていくわけですから。インターネットが登場した時には、その視点が欠けていたのかなと思います。

仕事が奪われるとか、人間がコントロールされるとか、映画のように、時としてAIは恐怖の対象として語られることも多い。将来的にそういう可能性がないとは言えませんが、そんな時は私たちがAIとどのように向き合い、関わるのかが問題になってくる。私は「仲良くやろうや」と握手を求めたいですね。

How will robots change humans?

What have you learned in your research on AI and robots?

I have been working on developing AI with emotions, and as the research has progressed, my interest has shifted toward how people behave when they interact with that AI. When I talk with other researchers, too, we end up concluding that research on AI and robots is ultimately research on humans. That is only natural, because it leads to studying how human life changes in response to changes in social systems. I think that perspective was lacking when the Internet first appeared.

AI is often discussed as something to be feared, as in films, something that will take away people's jobs or control humanity. I cannot say that there is no such possibility in the future. If it comes to that, the question will be how we face and engage with AI. I would like to hold out my hand and say, "Let's get along."